Last night at Boston Azure I teamed up with Jason Haley to cover the current Azure AI topics from the Microsoft-created Season of AI program. An engaged group showed up at NERD in Cambridge to hear all about it.

Jason Haley’s code and materials are here: https://jasonhaley.com/2024/06/25/boston-azure-june-2024/

For my part, I pulled content from Generative AI for .NET Developers and Getting Started with Azure AI Studio and blended in some material of my own. The combined mega-deck is attached to this post, though it covers much more than I had time to go through.

If you attended and have not yet had the opportunity to give feedback to Microsoft, please do. The survey has only a few quick questions.

Take the survey here: aka.ms/AttendeeSurveySeasonOfAI

Additional Resources

Also compliments of the Season of AI team, check out these resources.

Join the Azure AI Community on Discord

Connect with fellow enthusiasts, engage with Microsoft experts and MVPs, discuss your favorite sessions, and delve into AI discussions. Your space to ask, share, and explore!

Get started skilling with AI on Microsoft Learn

Build AI skills, connect with the community, earn Microsoft Credentials, learn from experts, and take the Cloud Skills Challenge.

Download Deck Bill Presented

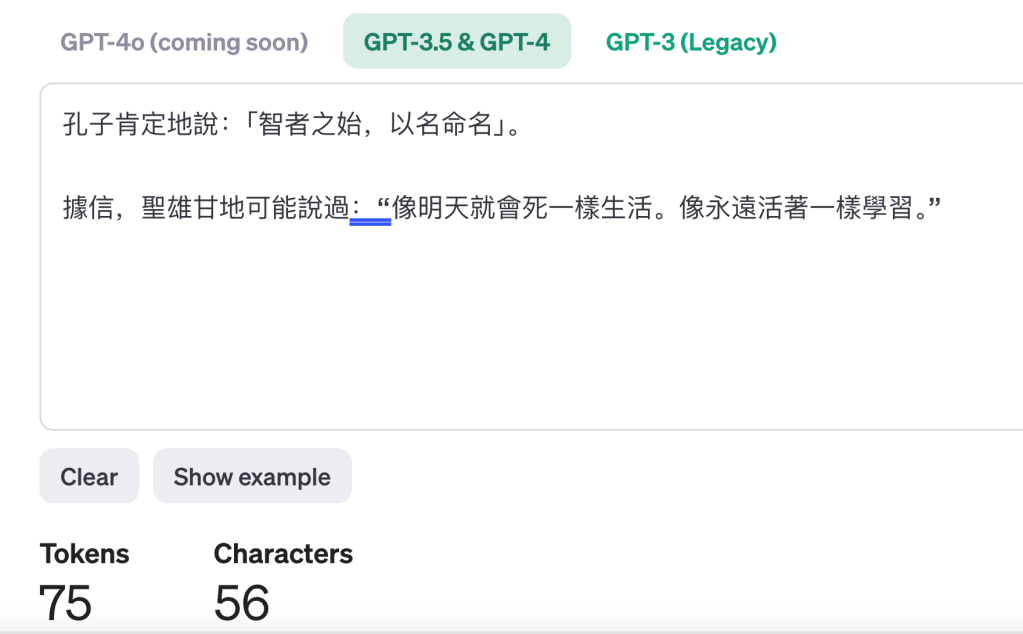

A diagram we spent time on (slightly updated, source here):

And finally, the deck I used follows:

And here are a couple of the links I showed during the talk that got a lot of discussion or attention:

Recall that the third example shown, Telugu, was wildly more expensive in terms of token count than the other two: Telugu weighed in at 353 tokens, compared with 50 tokens for English and 75 tokens for Chinese.
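To put those numbers in perspective, here is a minimal Python sketch that expresses each count as a multiple of the English count. It only does arithmetic on the three figures quoted above. It assumes the three counts are for equivalent text and that cost scales linearly with token count. The helper name `relative_cost` is hypothetical, made up for this illustration.

```python
# Token counts quoted in this post (assumed to be for equivalent text).
token_counts = {"English": 50, "Chinese": 75, "Telugu": 353}


def relative_cost(counts, baseline="English"):
    """Return each language's token count as a multiple of the baseline.

    Hypothetical helper: assumes cost is proportional to token count.
    """
    base = counts[baseline]
    return {lang: round(n / base, 2) for lang, n in counts.items()}


ratios = relative_cost(token_counts)
for lang, ratio in ratios.items():
    print(f"{lang}: {token_counts[lang]} tokens, {ratio}x the English count")
```

By this rough measure, the Telugu example used about seven times as many tokens as the English one, and the Chinese example about one and a half times as many.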