Q. Can I create a Startup Task that executes only when really in the Cloud? I mean really in the cloud. In other words, can I get my Startup Task to NOT RUN when I debug/deploy my Windows Azure application on my development machine?

A. The short answer is that while there is no built-in support for this, you can get the same effect with a simple trick: add a little logic to your Startup Script so that it controls which commands run in which environment. Before getting into that, let’s describe the problem in a bit more detail.

Update 14-Oct-2011: Stop the presses! This capability is now built into Windows Azure, and Steve Marx has a blog post on the matter. I will leave this blog post around since the details in it may be of value for other scenarios.

Suppose you want to use ASP.NET MVC 3 in your Windows Azure Web Role. At the time of this writing, MVC 2 was installed in Azure, but not MVC 3. What to do? The short answer is, you can install MVC 3 along with your application at deployment time in the cloud. This type of prerequisite installation is most conveniently handled using a Startup Task. The idea is that I include the ASP.NET MVC 3 bits with my app, and define a Startup Task that installs these bits, and I can set things up easily so that these bits are already installed before my Web Role tries to run (via a Simple Startup Task). This is a pretty clean solution. (For more on Startup Tasks and how to configure them see How to Define Startup Tasks for a Role. For specific guidance on installing ASP.NET MVC 3 as a Startup Task, see Technique #2 in the ASP.NET MVC 3 in Windows Azure post on Steve Marx’s blog.)

Example Startup Task That ALWAYS Runs

Of course, installing ASP.NET MVC 3 is only one example. Here is another example – a Startup Task that enables support for ADSI with IIS – let’s call it enable-webmetabase.cmd. First, you would add the following entry to ServiceDefinition.csdef:

    <?xml version="1.0" encoding="utf-8"?>
    <ServiceDefinition name="NameOfMyAzureApp" xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceDefinition">
    …
    <Startup>
      <Task commandLine="enable-webmetabase.cmd" executionContext="elevated" taskType="simple" />
    </Startup>
    …

The contents of enable-webmetabase.cmd would be something like the following (first enabling PowerShell scripting, then executing a specific script):

    powershell -command "Set-ExecutionPolicy Unrestricted"
    powershell .\enable-webmetabase.ps1

Though the specifics are not important for these instructions, since this script invokes a PowerShell script – let’s call it enable-webmetabase.ps1 – here is what that might look like:

    Import-Module ServerManager
    Add-WindowsFeature Web-Metabase

And as a final step, you would include both enable-webmetabase.cmd and enable-webmetabase.ps1 with your Visual Studio Project, and set the Copy to Output Directory property on each of these two files to be Copy always. Now, every time you deploy this Azure solution this Startup Task will be executed – and you can feel confident that you won’t have to worry about ADSI in IIS not being available (or whatever it is your Startup Tasks do for you).

Startup Tasks Run in Development Too

But what happens when I wish to deploy this solution on my development machine so I can quickly test it out while I am in the midst of development? Since the Windows Azure Platform has an outstanding local cloud simulation environment (which can be downloaded for free), “local” is the most common deployment target! It is not ideal that the Startup Tasks will run locally – I do not want to continually install ASP.NET MVC (or re-enable web metabase support, etc.) since that will just slow me down.

The Simple Workaround

I know of no built-in support that makes it easy for a Startup Task to “know” whether it is running in the cloud or in your local development environment. But it is simple to roll your own. Here’s what I do:

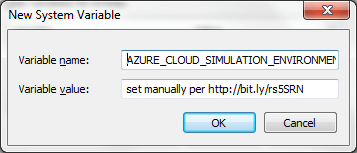

- Create an Environment Variable called AZURE_CLOUD_SIMULATION_ENVIRONMENT. While the exact value of this variable does not matter, for the sake of someone else who may see it and be puzzled, I set mine to be “set manually per http://bit.ly/rs5SRN” where the bit.ly link points back to this blog post. 🙂 It also doesn’t matter if the Environment Variable is user-specific or System-wide. If it is a shared development machine, I would make it System-wide (for all users).

- It is common practice when defining Startup Tasks to create a command script in a .cmd file and have that be the Startup Task. Within the Startup Task .cmd file, use the defined keyword (supported in the command shells of recent versions of Windows, such as those you will be using for Azure development and deployment) to add a little logic so that you run only those commands you wish to execute in the current environment.

To set up the AZURE_CLOUD_SIMULATION_ENVIRONMENT environment variable:

- Run SystemPropertiesAdvanced.exe to bring up the System Properties dialog box:

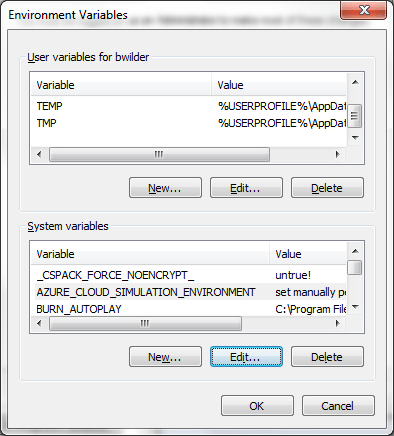

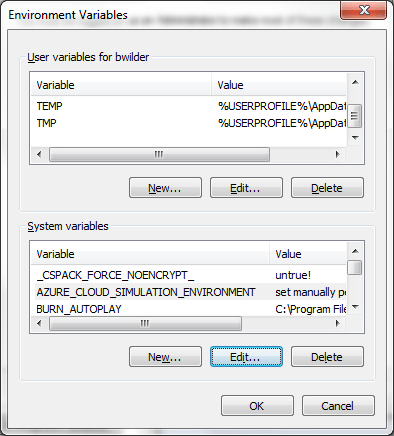

- Click the Environment Variables button to bring up the Environment Variables dialog box:

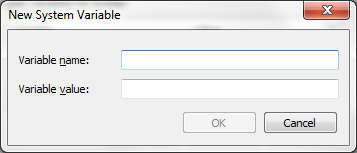

- Click the New… button at the bottom to bring up the New System Variable dialog box:

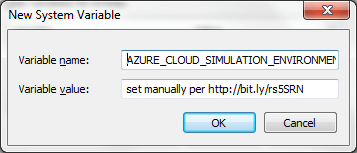

- Type AZURE_CLOUD_SIMULATION_ENVIRONMENT into the Variable name field, and type “set manually per http://bit.ly/rs5SRN” (without the quotes) into the Variable value field.

- Hit a few OK buttons and you’ll be done.
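If you prefer the command line to the dialog boxes, the same variable can probably be created with the standard Windows setx command. This is only a sketch, and it assumes you run it from an elevated (Administrator) command prompt, since the /M switch writes a system-wide variable; drop /M for a per-user variable. The reg query line is just one way to confirm the value was stored.

```batch
@echo off
rem Assumption: this runs from an elevated (Administrator) command prompt,
rem because /M stores the variable system-wide instead of per-user.
setx AZURE_CLOUD_SIMULATION_ENVIRONMENT "set manually per http://bit.ly/rs5SRN" /M

rem setx does not change the current session, so read the stored value
rem back from the registry key that holds system environment variables.
reg query "HKLM\SYSTEM\CurrentControlSet\Control\Session Manager\Environment" /v AZURE_CLOUD_SIMULATION_ENVIRONMENT
```

Because setx only affects processes started afterwards, you may need to open a new command window (and possibly restart Visual Studio and the local simulation environment) before the Startup Task sees the variable.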

Revised “Smart” Startup Task

Of course the trick is that the AZURE_CLOUD_SIMULATION_ENVIRONMENT variable will only be set on development machines, so it will NOT be set in the real cloud, getting you the desired results. Here is the same enable-webmetabase.cmd Startup Task script from above, except rewritten so that when you run it locally it will not do anything to your development machine.

    if defined AZURE_CLOUD_SIMULATION_ENVIRONMENT goto SKIP
    powershell -command "Set-ExecutionPolicy Unrestricted"
    powershell .\enable-webmetabase.ps1
    :SKIP

The line “if defined AZURE_CLOUD_SIMULATION_ENVIRONMENT goto SKIP” simply checks whether AZURE_CLOUD_SIMULATION_ENVIRONMENT exists in the environment, and if it does exist, the script jumps over the two powershell lines. This is pretty handy!

Again, in summary, if you follow the very simple approach in this post, the AZURE_CLOUD_SIMULATION_ENVIRONMENT variable will exist only on development machines (in the simulated cloud) and not out in the “real” cloud.

Not to be Confused with RoleEnvironment.IsAvailable

There is another technique – that is built into Azure – which you can use in code that needs to behave one way when running under Windows Azure, and another way when not running under Windows Azure: RoleEnvironment.IsAvailable. This is good for code that might be deployed both in, say, an Azure Web Role and in a non-Azure ASP.NET web site. For Azure applications, RoleEnvironment.IsAvailable will be true for both the local development machine and when deployed into the public cloud.

While RoleEnvironment.IsAvailable and AZURE_CLOUD_SIMULATION_ENVIRONMENT serve different purposes, they are complementary and can be used together.

For more information on RoleEnvironment.IsAvailable, there is documentation and a good description of its use.

Other Uses for the Technique

Maybe you want to do certain things ONLY in your development environment. For example, perhaps you wish to launch Fiddler. Or maybe uninstall a Windows Service (via InstallUtil /u <service exe name>). Whatever your needs – you can use the same simple technique to make this easy. The following syntax is also supported – each bullet being a single line (though some of them may appear on more than one line in this blog post):

- if defined AZURE_CLOUD_SIMULATION_ENVIRONMENT (echo AZURE_CLOUD_SIMULATION_ENVIRONMENT equals %AZURE_CLOUD_SIMULATION_ENVIRONMENT%) else (echo AZURE_CLOUD_SIMULATION_ENVIRONMENT is NOT defined)

- if defined AZURE_CLOUD_SIMULATION_ENVIRONMENT echo DOING SOMETHING

- if NOT defined AZURE_CLOUD_SIMULATION_ENVIRONMENT echo DOING SOMETHING ELSE
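Putting these forms together, a single Startup Task script with one development-only branch and one cloud-only branch might look like the sketch below. It reuses the enable-webmetabase.ps1 script from earlier in this post. The echo message is only a placeholder for whatever you want to do locally, and the unconditional exit /b 0 is my own assumption: it keeps a simple Startup Task from blocking role startup with a non-zero exit code, at the cost of hiding failures.

```batch
@echo off
rem Sketch only: one Startup Task script with a dev-only and a cloud-only branch.
if defined AZURE_CLOUD_SIMULATION_ENVIRONMENT (
    rem Development machine: skip the installs; placeholder action only.
    echo Local simulation environment detected, skipping cloud-only setup.
) else (
    rem Real cloud: same commands as the original enable-webmetabase.cmd.
    powershell -command "Set-ExecutionPolicy Unrestricted"
    powershell .\enable-webmetabase.ps1
)

rem Assumption: always report success so role startup continues.
rem Remove this line if you would rather have failures surface.
exit /b 0
```

The block form does the same job as the goto SKIP version above; it just keeps both branches visible in one place, which can be easier to read once the script grows.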

Is this useful? Did I leave out something interesting or get something wrong? Please let me know in the comments! Think other people might be interested? Spread the word!
